The ’70s also marked a few other important milestones in speech recognition technology, including the founding of the first commercial speech recognition company, Threshold Technology, as well as Bell Laboratories’ introduction of a system that could interpret multiple people’s voices. In 1990, Dragon launched the first consumer speech recognition product, Dragon Dictate, for an incredible price of $9000. Seven years later, the much-improved Dragon NaturallySpeaking arrived. The application recognized continuous speech, so you could speak, well, naturally, at about 100 words per minute. However, you had to train the program for 45 minutes, and it was still expensive at $695. The first voice portal, VAL from BellSouth, arrived in 1996. VAL was a dial-in interactive voice recognition system that was supposed to give you information based on what you said on the phone. VAL paved the way for all the inaccurate voice-activated menus that would plague callers for the next 15 years and beyond.

The earliest efforts may not sound like much, but they were an impressive start, especially when you consider how primitive computers themselves were at the time. Speech recognition technology made major strides in the 1970s, thanks to interest and funding from the U.S. Department of Defense. The DoD’s DARPA Speech Understanding Research (SUR) program, from 1971 to 1976, was one of the largest of its kind in the history of speech recognition, and among other things it was responsible for Carnegie Mellon’s “Harpy” speech-understanding system. Harpy could understand 1011 words, approximately the vocabulary of an average three-year-old. Harpy was significant because it introduced a more efficient search approach, called beam search, to “prove the finite-state network of possible sentences,” according to Readings in Speech Recognition by Alex Waibel and Kai-Fu Lee. (The story of speech recognition is very much tied to advances in search methodology and technology, as Google’s entrance into speech recognition on mobile devices proved just a few years ago.)

“We released a model, but that actually was not enough to cause the whole developer ecosystem to build around it,” Brockman said in a video call with TechCrunch yesterday afternoon. “The Whisper API is the same large model that you can get open source, but we’ve optimized to the extreme. It’s much, much faster and extremely convenient.” To Brockman’s point, there’s plenty in the way of barriers when it comes to enterprises adopting voice transcription technology. According to a 2020 Statista survey, companies cite accuracy, accent- or dialect-related recognition issues and cost as the top reasons they haven’t embraced tech like text-to-speech. Whisper has its limitations, though - particularly in the area of “next-word” prediction. Because the system was trained on a large amount of noisy data, OpenAI cautions that Whisper might include words in its transcriptions that weren’t actually spoken - possibly because it’s both trying to predict the next word in audio and transcribe the audio recording itself. Moreover, Whisper doesn’t perform equally well across languages, suffering from a higher error rate when it comes to speakers of languages that aren’t well-represented in the training data. That last bit is nothing new to the world of speech recognition, unfortunately. Biases have long plagued even the best systems, with a 2020 Stanford study finding systems from Amazon, Apple, Google, IBM and Microsoft made far fewer errors with users who are white, an error rate of about 19%, than with users who are Black.

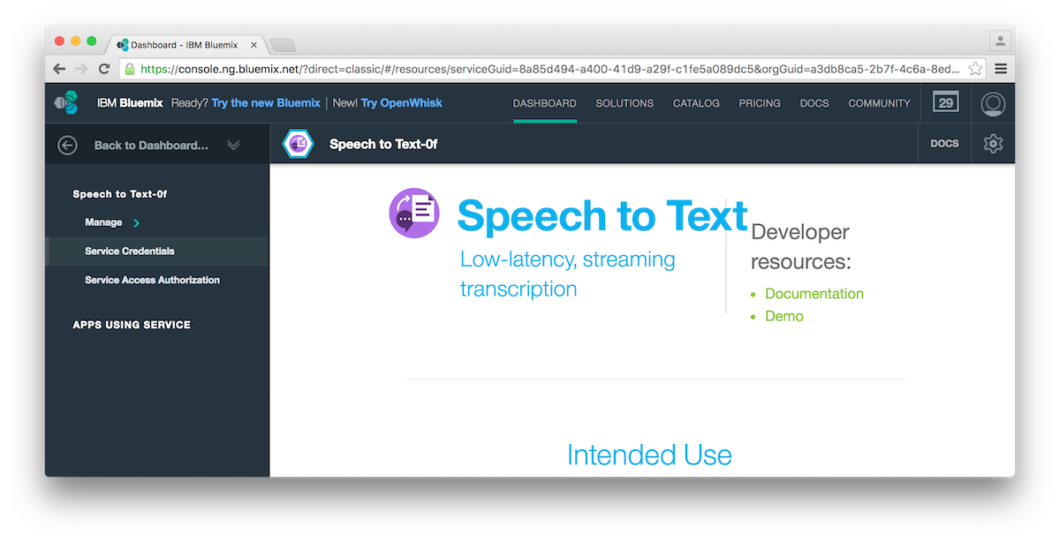

To coincide with the rollout of the ChatGPT API, OpenAI today launched the Whisper API, a hosted version of the open source Whisper speech-to-text model that the company released in September. Priced at $0.006 per minute, Whisper is an automatic speech recognition system that OpenAI claims enables “robust” transcription in multiple languages as well as translation from those languages into English. It takes files in a variety of formats, including M4A, MP3, MP4, MPEG, MPGA, WAV and WEBM. Countless organizations have developed highly capable speech recognition systems, which sit at the core of software and services from tech giants like Google, Amazon and Meta. But what makes Whisper different is that it was trained on 680,000 hours of multilingual and “multitask” data collected from the web, according to OpenAI president and chairman Greg Brockman, which led to improved recognition of unique accents, background noise and technical jargon.
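
To put the quoted price and format list in concrete terms, here is a rough Python sketch. It does not call the Whisper API. The helper names `is_supported` and `estimate_cost_usd` are made up for illustration, the format list is only the one given in this article, and the cost figure assumes simple pro-rata billing at $0.006 per minute, which may not match how OpenAI actually rounds usage.

```python
from pathlib import Path

# Figures quoted in the article; treat both as assumptions that may change.
PRICE_PER_MINUTE_USD = 0.006
SUPPORTED_EXTENSIONS = {"m4a", "mp3", "mp4", "mpeg", "mpga", "wav", "webm"}


def is_supported(filename: str) -> bool:
    """Return True if the file extension is in the article's format list."""
    extension = Path(filename).suffix.lower().lstrip(".")
    return extension in SUPPORTED_EXTENSIONS


def estimate_cost_usd(duration_seconds: float) -> float:
    """Estimate transcription cost, assuming pro-rata per-minute billing."""
    if duration_seconds < 0:
        raise ValueError("duration_seconds must be non-negative")
    minutes = duration_seconds / 60
    return round(minutes * PRICE_PER_MINUTE_USD, 6)
```

Under those assumptions, an hour-long recording (3,600 seconds) works out to roughly $0.36, and a file named `interview.flac` would be flagged as needing conversion before upload.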
